AI Development: Concepts & Models

1. End-to-End (E2E) Architecture: Our Foundational Philosophy

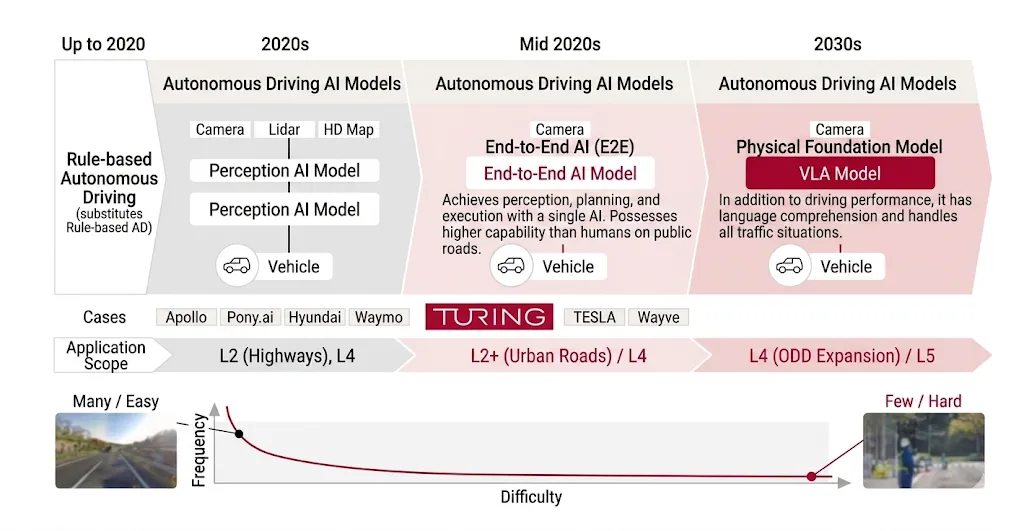

Turing develops autonomous driving AI using End-to-End (E2E) architecture—a single neural network that receives raw video input and directly outputs driving behavior. This contrasts sharply with rule-based approaches: instead of detecting pedestrians, identifying obstacles, and applying programmed rules to avoid them, we let a trained model learn the entire mapping from perception to action.
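To make the contrast concrete, here is a minimal Python sketch. It is not Turing’s implementation, and several details are assumptions. The action format of (steering, acceleration) is hypothetical. A single linear layer stands in for a large neural network. Training is assumed to work by imitating recorded human driving (behavior cloning), which the text does not specify. `rule_based_policy`, `EndToEndPolicy`, and `train_step` are illustrative names.

```python
import random
from typing import List, Sequence, Tuple

# Hypothetical action format: (steering, acceleration).
Action = Tuple[float, float]


def rule_based_policy(detections: Sequence[str]) -> Action:
    """Rule-based style: perception labels feed hand-written rules.
    Every new edge case needs another branch."""
    if "pedestrian" in detections:
        return (0.0, -1.0)  # brake
    if "obstacle" in detections:
        return (0.3, -0.5)  # steer away and slow down
    return (0.0, 0.5)  # default: cruise


class EndToEndPolicy:
    """E2E style: one learned function from raw pixels straight to an action.
    A single linear layer stands in for a large neural network (toy assumption)."""

    def __init__(self, num_pixels: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # One weight row per output (steering, acceleration), to be learned from data.
        self.weights: List[List[float]] = [
            [rng.uniform(-0.01, 0.01) for _ in range(num_pixels)] for _ in range(2)
        ]

    def __call__(self, pixels: Sequence[float]) -> Action:
        steer = sum(w * p for w, p in zip(self.weights[0], pixels))
        accel = sum(w * p for w, p in zip(self.weights[1], pixels))
        return (steer, accel)

    def train_step(self, pixels: Sequence[float], target: Action, lr: float = 0.01) -> float:
        """One gradient-descent step on squared error against a recorded human action
        (behavior cloning, assumed). Returns the loss measured before the update."""
        pred = self(pixels)
        loss = 0.0
        for k in range(2):
            err = pred[k] - target[k]
            loss += err * err
            for i, p in enumerate(pixels):
                self.weights[k][i] -= lr * 2.0 * err * p
        return loss


if __name__ == "__main__":
    frame = [0.5] * 16  # a flattened 4x4 grayscale "frame"
    policy = EndToEndPolicy(num_pixels=len(frame))
    losses = [policy.train_step(frame, target=(0.2, -0.3)) for _ in range(100)]
    print(f"loss: {losses[0]:.4f} -> {losses[-1]:.6f}")
```

Note where the behavior lives: in the rule-based function, every response is a branch someone wrote; in the E2E policy, it is encoded in weights fit to data, so handling a new situation means adding data rather than code.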

Why E2E? Because roads present an endless stream of difficult, low-frequency situations. Construction zones, traffic marshals, situations that require yielding the right of way, pedestrians who look about to cross and then don’t: the exceptions never end. Humans handle these scenarios with common sense and contextual judgment. If you try to cover them by adding rules and conditional logic, you eventually hit a scaling wall. Writing more code cannot solve this, because the problem is not one that code can enumerate.

In our view, full autonomous driving ultimately requires a design in which “AI handles everything,” and full autonomy cannot be achieved without it.

2. Why Initial Progress Is Slow, But Later Acceleration Is Exponential

E2E development starts slowly: getting a system to run at all is hard. But once data and compute reach sufficient scale, performance improves dramatically, and the more data and GPU compute we feed into the system, the faster it improves. The improvement curve becomes exponential.

This dynamic has been our consistent technical premise since Turing’s founding.

3. The Development Core: Improvement Cycles, Not Model Architecture

At first glance, E2E seems simple: feed in video, get out a driving trajectory. But actual development is dominated not by the model itself but by the machinery that develops it.

Turing’s development works through a cycle: collect driving data → train models → deploy to real vehicles → validate on public roads → feed the results back into training. Simulation and offline desk evaluation cannot tell the whole story. Only when the car runs on real roads do the large gaps become visible: what the vehicle can do, what it cannot do, and what it can do but not well enough.

We observe these gaps, decide what to improve next, redesign data collection to capture the necessary scenarios, retrain, and deploy again. The speed of this iteration cycle determines development velocity and competitive outcomes. Model development is not a one-shot attempt to guess the right answer; it is the accumulated result of trial and error informed by real-world results.
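The cycle described above can be sketched as a loop. This is a toy Python model, not Turing’s pipeline, and its assumptions are deliberately simple. `train`, `validate_on_road`, and `plan_collection` are hypothetical stand-ins. Per-scenario skill is assumed to saturate as n / (n + 100) with the number of clips n. Road validation is assumed to report that skill directly as a pass rate. The sketch only illustrates the feedback structure: the weakest scenario observed on the road decides what gets collected next.

```python
from typing import Dict, List

Scores = Dict[str, float]


def train(data_counts: Dict[str, int]) -> Scores:
    """Toy stand-in for training: per-scenario skill saturates as data grows."""
    return {s: n / (n + 100) for s, n in data_counts.items()}


def validate_on_road(model: Scores) -> Scores:
    """Toy stand-in for deployment plus public-road validation:
    returns the observed pass rate per scenario."""
    return dict(model)


def plan_collection(results: Scores, budget: int) -> Dict[str, int]:
    """Decide what to improve next: spend the whole budget on the weakest scenario."""
    weakest = min(results, key=results.get)
    return {weakest: budget}


def run_cycles(data_counts: Dict[str, int], num_cycles: int, budget: int = 200) -> List[Scores]:
    """Collect -> train -> deploy/validate -> feed back, repeated num_cycles times."""
    counts = dict(data_counts)
    history: List[Scores] = []
    for _ in range(num_cycles):
        model = train(counts)
        results = validate_on_road(model)
        history.append(results)
        for scenario, clips in plan_collection(results, budget).items():
            counts[scenario] += clips  # targeted collection feeds the next round
    return history


if __name__ == "__main__":
    start = {"highway": 1000, "construction_zone": 20, "traffic_marshal": 10}
    for i, results in enumerate(run_cycles(start, num_cycles=3)):
        print(i, {s: round(r, 2) for s, r in results.items()})
```

In this toy, cycle speed matters because every round both measures the current weak spot and funds the data that fixes it; fewer rounds per unit time means fewer corrections.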

4. Data-Centric Development: Dataset Design Determines Performance

Turing emphasizes data-centric development. Driving data exists in enormous volume, but raw data cannot be used for training as-is. We must clean up gaps and inconsistencies, restructure the distribution toward what is learnable, and build specialized datasets for hypothesis testing.

The key insight: data quality is not just about volume; it is about distribution design. Which scenarios are underrepresented? For which types of difficulty is learning still insufficient? Identifying these gaps, targeting collection at them, and feeding that data into training is what accelerates improvement.
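One simple way to make distribution design concrete is to compare each scenario’s share of the dataset with a target share, flag the shortfalls as collection targets, and reweight sampling for training. The sketch below assumes each clip carries a single scenario label and that the target shares are chosen by the team. The labels, target shares, and function names are hypothetical, and inverse-frequency reweighting is one common technique, not necessarily the one Turing uses.

```python
import random
from collections import Counter
from typing import Dict, List, Sequence


def underrepresented(labels: Sequence[str], target_share: Dict[str, float]) -> List[str]:
    """Scenarios whose share of the dataset falls below the target (collection targets)."""
    counts = Counter(labels)
    total = len(labels)
    return sorted(s for s, share in target_share.items() if counts[s] / total < share)


def sampling_weights(labels: Sequence[str], target_share: Dict[str, float]) -> List[float]:
    """Per-clip weights so each scenario's expected share of sampled clips matches its target.
    Assumes every label in `labels` has an entry in `target_share`."""
    counts = Counter(labels)
    return [target_share[label] / counts[label] for label in labels]


def resample(labels: Sequence[str], target_share: Dict[str, float],
             k: int, seed: int = 0) -> List[str]:
    """Draw a rebalanced training mix of k clips, with replacement."""
    rng = random.Random(seed)
    return rng.choices(list(labels), weights=sampling_weights(labels, target_share), k=k)


if __name__ == "__main__":
    labels = ["highway"] * 80 + ["construction_zone"] * 15 + ["traffic_marshal"] * 5
    target = {"highway": 0.5, "construction_zone": 0.25, "traffic_marshal": 0.25}
    print(underrepresented(labels, target))  # scenarios to target in collection
    print(Counter(resample(labels, target, k=1000)))
```

Reweighting only repeats clips that already exist: a scenario with very few examples (or none) still needs targeted collection, which is why `underrepresented` reports the gap separately.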

MLOps serves as our “factory” for this work: collecting data, processing it, feeding it to training, and returning results. The faster we spin this factory, the faster our model evolves.

5. Industry Momentum and Second-Mover Advantage

The autonomous driving industry is now moving decisively toward E2E. Top players have demonstrated one fact: large transformer-based neural networks, running on vehicles within the limits of physics, can reach the level of capability they now show. This is enormously significant for followers: achievability is proven, the goal is visible, and the path upward is clear.

Turing’s strategy is to track the leading players accurately and follow them competently. Unlike software markets with winner-take-all dynamics, the automotive industry has manufacturing and vehicle replacement cycles, so second movers have viable paths to winning. Rather than over-promising with stories, we focus on putting in place the preconditions needed to catch up under real-world conditions.

6. Frontier Models and On-Vehicle Deployment: The Architecture We’re Building

Full autonomy demands a frontier model—a powerful, driving-specialized foundation model that exists behind the on-vehicle models users directly interact with. This frontier model requires Vision-Language-Action (VLA) capabilities (integrating visual understanding, language processing, and action) and a world model (understanding how the physical world behaves and predicting the future).

Deploying such a massive model directly to vehicles is impractical because of power consumption, heat dissipation, environmental durability, and real-time latency requirements. Instead, we use world-model-guided learning and knowledge distillation to compress its capabilities and convert them into deployment-ready on-vehicle models.
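Knowledge distillation, in its common formulation, trains a small student model to match a large teacher model’s temperature-softened output distribution by minimizing the KL divergence between them. The sketch below assumes, purely for illustration, that both models score the same small set of candidate trajectories. The logits, temperature, and learning rate are hypothetical, and the world-model-guided part of Turing’s approach is not shown.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-softened softmax; subtracting the max keeps exp() numerically stable."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: Sequence[float], student_logits: Sequence[float],
                      temperature: float = 2.0) -> float:
    """T^2 * KL(teacher || student) over the softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


def distill_step(teacher_logits: Sequence[float], student_logits: Sequence[float],
                 temperature: float = 2.0, lr: float = 0.5) -> List[float]:
    """One gradient step on the student logits.
    The gradient of T^2 * KL(p || softmax(z / T)) with respect to z_j is T * (q_j - p_j)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return [z - lr * temperature * (qj - pj) for z, qj, pj in zip(student_logits, q, p)]


if __name__ == "__main__":
    teacher = [2.0, 0.5, -1.0]  # hypothetical frontier-model scores for 3 candidate trajectories
    student = [0.0, 0.0, 0.0]   # untrained on-vehicle model
    print("before:", round(distillation_loss(teacher, student), 4))
    for _ in range(200):
        student = distill_step(teacher, student)
    print("after:", round(distillation_loss(teacher, student), 6))
```

The temperature softens the teacher’s distribution so the student also learns how the teacher ranks the non-preferred candidates, not just its top choice; the T^2 factor keeps gradient magnitudes roughly comparable across temperatures.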

This is the architecture Turing is building toward.

7. The Winning Strategy: Complete Commitment to AI and Accumulating Preconditions

Turing’s AI development does not chase problems by adding rules; it bets completely on a design that trusts AI. To make that bet work, we keep spinning GPUs, collecting data, and running improvement cycles.

Full autonomous driving will not arrive through a sudden breakthrough. It will arrive by incorporating real-world results, running learning and validation at speed, and accumulating generalization performance over the long term. Turing continues building the structures to win that sustained competition.
