MLOps at Turing

The Role of MLOps at Turing

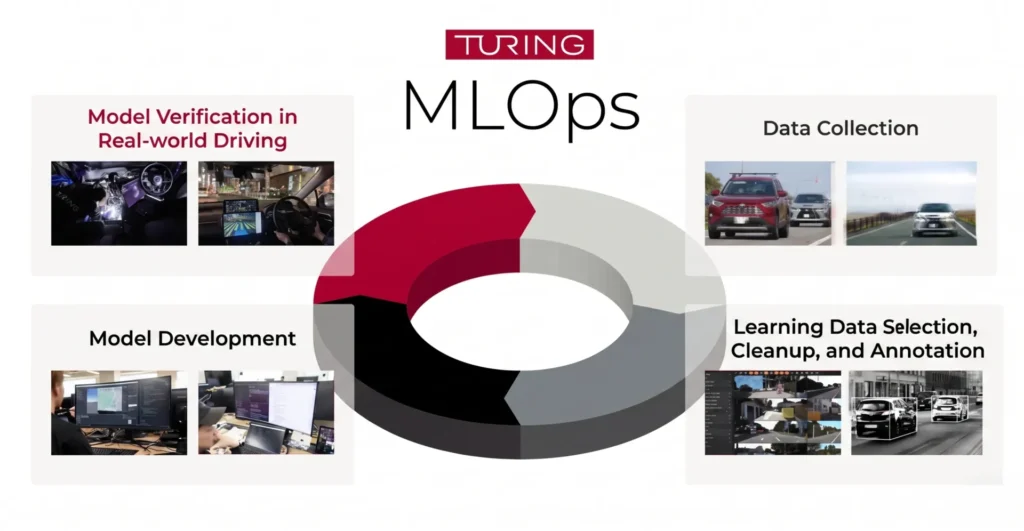

At Turing, MLOps isn’t a supporting function for ML engineers; it is the central mechanism that advances fully autonomous driving. A model’s performance doesn’t exist in isolation: it only matters if that model can be deployed, run, validated, and continuously improved in the real world.

We frame MLOps as our “autonomous driving factory.” The stronger the factory, the higher the experiment velocity, the faster the learning-validation loop, and the greater the total volume of accumulated improvement.

The MLOps Workflow: End-to-End

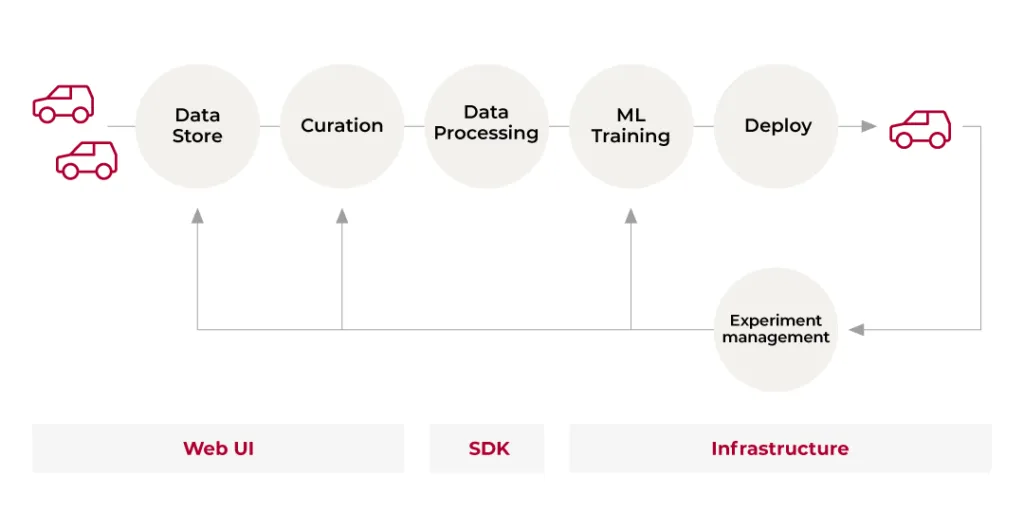

Turing’s MLOps is structured as a continuous workflow spanning six phases: Data Store, Curation, Data Processing, Training, Deployment, and Experiment Management. What makes this distinctive is that vehicles appear at both ends of the workflow—data collection and real-world validation are built into the cycle, not added on top of it.

Step 1 Data Store

Data flows daily from collection vehicles into the data store. The priority is stable ingestion, detection of missing data and quality issues, and reliable storage at scale.

Step 2 Curation

Not all data is used for training. We select data that will be effective for learning: filtering out low-quality samples, adjusting distribution imbalances, and designing coverage across road environments and geographic areas.

Step 3 Data Processing

Selected data is segmented into scenes, sampled, processed through auto-labeling, and converted into training-ready format.

Step 4 Training

Processed datasets feed into model training.

Step 5 Deployment

Trained models are deployed to vehicles and validated through real-world driving.

Step 6 Experiment Management

Experiment management extends beyond training logs. Results from real-world vehicle runs are integrated and evaluated. Cases that didn’t work are fed back into the front of the cycle as “data we need to collect next.” This feedback loop is the core of continuous improvement.
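As a rough sketch of how failed runs could become “data we need to collect next,” the snippet below counts failures per driving condition and ranks them. The record fields (`road_type`, `weather`, `passed`) and the function name are hypothetical, chosen only for illustration; they do not describe Turing’s actual schema.

```python
from collections import Counter


def collection_priorities(run_results, top_n=3):
    """Rank driving conditions by how often real-world runs failed in them.

    `run_results` is a list of dicts with hypothetical keys
    "road_type", "weather", and "passed".
    """
    failures = Counter(
        (r["road_type"], r["weather"]) for r in run_results if not r["passed"]
    )
    # most_common sorts by count; ties keep first-seen order.
    return failures.most_common(top_n)


runs = [
    {"road_type": "intersection", "weather": "rain", "passed": False},
    {"road_type": "intersection", "weather": "rain", "passed": False},
    {"road_type": "highway", "weather": "clear", "passed": True},
    {"road_type": "narrow_street", "weather": "clear", "passed": False},
]
print(collection_priorities(runs))
```

The ranked conditions can then be handed to the collection side as a request list, which is what closes the loop back to Step 1.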

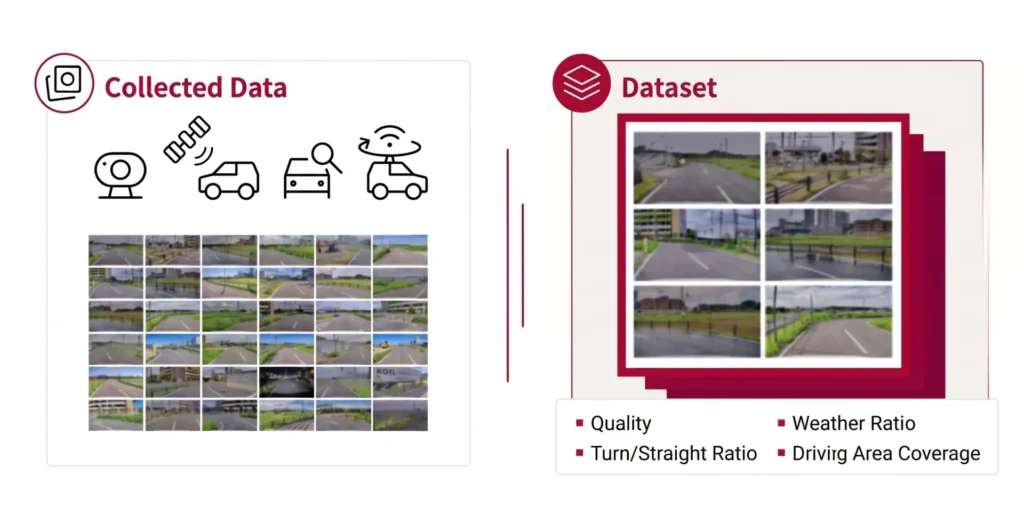

Data Store and Curation: From Collecting to Making Data Usable

Autonomous driving data is largely unstructured and difficult to use for training as-is. At Turing, video, sensor, and vehicle signal data from collection vehicles are aggregated into the data store. The immediate priority is detecting missing data and quality issues, and ensuring stable ingestion operations.
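One simple form of missing-data detection is checking for gaps between consecutive timestamps in a recorded stream. The following is a minimal sketch under assumed conditions: a single stream with a known nominal period, and hypothetical names (`Gap`, `find_gaps`, `tolerance`) that are not taken from Turing’s system.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    start: float  # timestamp of the last sample before the gap (seconds)
    end: float    # timestamp of the first sample after the gap (seconds)


def find_gaps(timestamps, expected_period, tolerance=1.5):
    """Return gaps where two consecutive samples are farther apart
    than tolerance * expected_period."""
    ordered = sorted(timestamps)
    gaps = []
    for prev, cur in zip(ordered, ordered[1:]):
        if cur - prev > tolerance * expected_period:
            gaps.append(Gap(prev, cur))
    return gaps


# A nominally 10 Hz camera stream with frames missing between 0.2 s and 0.6 s.
frames = [0.0, 0.1, 0.2, 0.6, 0.7]
print(find_gaps(frames, expected_period=0.1))
```

The tolerance factor keeps ordinary timing jitter from being reported as data loss; the right value depends on the sensor.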

From there, curation becomes critical. To improve training efficiency, we filter out low-quality data while adjusting for distribution imbalances—calibrating ratios of straight driving to turns, and managing geographic and road environment coverage. The goal is designing data that is genuinely effective for learning.
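To make the idea of calibrating ratios concrete, here is a small sketch that downsamples clips to a target ratio of maneuvers. It assumes each clip carries a hypothetical `maneuver` label and that the ratio is given as integer parts (for example 2 straight clips per turn clip). Downsampling is only one possible strategy; the article does not say which method Turing uses.

```python
import random
from collections import defaultdict


def rebalance(clips, target_parts, seed=0):
    """Downsample clips so maneuvers appear in the ratio given by target_parts.

    target_parts maps a maneuver label to an integer share,
    e.g. {"straight": 2, "turn": 1} keeps 2 straight clips per turn clip.
    """
    by_maneuver = defaultdict(list)
    for clip in clips:
        by_maneuver[clip["maneuver"]].append(clip)

    # Largest number of whole "ratio units" that every category can supply.
    units = min(len(by_maneuver[m]) // parts for m, parts in target_parts.items())

    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    selected = []
    for m, parts in target_parts.items():
        selected.extend(rng.sample(by_maneuver[m], units * parts))
    return selected


clips = [{"id": i, "maneuver": "straight"} for i in range(80)]
clips += [{"id": 80 + i, "maneuver": "turn"} for i in range(20)]
picked = rebalance(clips, {"straight": 2, "turn": 1})
print(len(picked))
```

Here the 20 turn clips are the limiting category, so the result keeps all of them plus 40 randomly chosen straight clips.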

Central to this is metadata: tags that record where, when, and under what conditions each clip was collected, such as location, time of day, weather, and intersection presence. These tags allow us to search and retrieve the right data long after it was collected. Tags are not fixed at collection time. We run systems that dynamically redefine tag schemas, so that as our hypotheses evolve, we can slice the data in new ways without recollecting it.
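One way to picture a redefinable tag schema is to keep raw metadata per clip and compute tags from it with a swappable function. The sketch below is illustrative only: the metadata fields, the two schema functions (`tag_v1`, `tag_v2`), and the `search` helper are all hypothetical and do not describe Turing’s tagging system.

```python
def tag_v1(meta):
    """First schema: a coarse day/night split."""
    return {"time_of_day": "day" if 6 <= meta["hour"] < 18 else "night"}


def tag_v2(meta):
    """Revised schema: finer time buckets plus a derived wet-road tag."""
    hour = meta["hour"]
    if 5 <= hour < 7:
        bucket = "dawn"
    elif 7 <= hour < 17:
        bucket = "day"
    elif 17 <= hour < 19:
        bucket = "dusk"
    else:
        bucket = "night"
    return {"time_of_day": bucket, "wet_road": meta["weather"] in {"rain", "snow"}}


def search(clips, schema, **wanted):
    """Return ids of clips whose tags, computed by `schema`, match all of `wanted`."""
    hits = []
    for meta in clips:
        tags = schema(meta)
        if all(tags.get(key) == value for key, value in wanted.items()):
            hits.append(meta["id"])
    return hits


clips = [
    {"id": "clip-001", "hour": 8, "weather": "rain"},
    {"id": "clip-002", "hour": 18, "weather": "clear"},
    {"id": "clip-003", "hour": 23, "weather": "rain"},
]
print(search(clips, tag_v1, time_of_day="night"))
print(search(clips, tag_v2, time_of_day="dusk"))
```

Because tags are derived from stored metadata rather than written once, switching from `tag_v1` to `tag_v2` changes how the same clips can be sliced without touching the recordings.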

Data Processing: Automating Dataset Creation at Scale

Data Processing carries especially high weight in Turing’s MLOps. In autonomous driving, raw video and sensor logs don’t feed directly into training. They must be segmented into scenes—discrete clips—then sampled and transformed through auto-labeling and other steps into training-ready format.
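The segmentation and sampling steps can be sketched as follows. This assumes fixed-length, non-overlapping scenes and uniform sampling, with integer millisecond timestamps; the function names and parameters are hypothetical, and a real pipeline may cut scenes on events rather than fixed windows.

```python
def split_into_scenes(start_ms, end_ms, scene_ms):
    """Cut a log into non-overlapping fixed-length scenes.

    Returns (scene_start, scene_end) pairs; a trailing partial scene is dropped.
    """
    return [
        (s, s + scene_ms)
        for s in range(start_ms, end_ms - scene_ms + 1, scene_ms)
    ]


def sample_timestamps(scene, period_ms):
    """Pick sample timestamps inside a scene at a fixed period."""
    scene_start, scene_end = scene
    return list(range(scene_start, scene_end, period_ms))


# A 25-second log cut into 10-second scenes, sampled every 2.5 seconds.
scenes = split_into_scenes(0, 25_000, scene_ms=10_000)
print(scenes)
print(sample_timestamps(scenes[0], period_ms=2_500))
```

Each sampled timestamp would then be passed on to auto-labeling and format conversion.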

Tasks that once took days of manual work now run in hours through tooling and cloud execution. This means multiple team members can run experiments at the same pace without depending on a single specialist.

As data scale grows, processing design becomes harder. Increasing scene counts and sampling rates multiply data volume, surfacing bottlenecks, timeouts, and rising costs. We continuously revisit processing methods and execution infrastructure—workflow engines, batch execution, distributed processing—balancing robustness with cost efficiency. MLOps is not a system you build once. It is a domain that requires continuous redesign as scale increases.

Training to Deployment: Closing the Gap Between the Desk and the Road

Model training results cannot be confirmed through desk-based evaluation alone. In autonomous driving ML development, inference results that look good in video can produce lane departures or unstable behavior in real vehicles. At Turing, validating trained models on real vehicles is the baseline, and we also build evaluation and visualization systems that filter out potentially dangerous models before they reach that stage.
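A pre-deployment filter of this kind can be as simple as comparing offline metrics against upper limits. The sketch below is a hedged illustration: the metric names, the thresholds, and the function `passes_offline_gate` are invented for the example, not Turing’s actual criteria.

```python
def passes_offline_gate(metrics, limits):
    """Check offline metrics against upper limits before vehicle deployment.

    Returns (ok, violations). A metric that is missing is treated as a
    violation, so an incomplete evaluation cannot pass by accident.
    """
    violations = []
    for name, max_value in limits.items():
        value = metrics.get(name)
        if value is None or value > max_value:
            violations.append(name)
    return (not violations, violations)


# Hypothetical limits and one candidate model's offline results.
limits = {"lane_departure_rate": 0.01, "max_lateral_jerk": 2.0}
candidate = {"lane_departure_rate": 0.03, "max_lateral_jerk": 1.2}
print(passes_offline_gate(candidate, limits))
```

A gate like this only screens out clearly unsafe candidates; it does not replace validation on the road.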

Deployment to real vehicles requires aligning not just model weights, but also pre-processing, post-processing, and training configuration. This makes versioning and packaging a critical part of deployment design. As update frequency increases, the reliability of this process becomes a direct determinant of development velocity.
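One common way to keep these pieces aligned is a manifest that records all of them together and derives a single identifier from their contents. The following is a minimal sketch assuming JSON-serializable configuration; the field names and `build_manifest` are hypothetical and not a description of Turing’s packaging format.

```python
import hashlib
import json


def build_manifest(weights, preprocessing, postprocessing, training_config):
    """Bundle everything a vehicle needs to reproduce a model's behavior.

    `weights` is the raw bytes of the weights file; the other arguments are
    JSON-serializable dicts. The package id changes if any part changes.
    """
    manifest = {
        "weights_sha256": hashlib.sha256(weights).hexdigest(),
        "preprocessing": preprocessing,
        "postprocessing": postprocessing,
        "training_config": training_config,
    }
    # Canonical JSON (sorted keys, fixed separators) so equal content hashes equally.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    manifest["package_id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
    return manifest


manifest = build_manifest(
    weights=b"fake-weights-for-illustration",
    preprocessing={"resize": [224, 224], "normalize": True},
    postprocessing={"smoothing_window": 5},
    training_config={"learning_rate": 0.001, "epochs": 30},
)
print(manifest["package_id"])
```

Because the identifier covers pre-processing and post-processing as well as weights, two packages with the same weights but different input handling cannot be confused on the vehicle.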

Experiment Management and Cross-Team Collaboration: Running MLOps as an Organization

MLOps doesn’t run within the MLOps team alone. Data collection, vehicle control, and model development teams are all part of the same cycle. When problems arise, determining whether the root cause lies in ML, vehicle control, or middleware requires cross-team collaboration that cannot be skipped.

This is why the scope of MLOps at Turing includes building a shared language for results, such as being able to instantly view the same driving scene via a video viewer URL. It also includes dashboards that visualize quality, cost, and processing status, and Slack notifications for anomalies.

As development progresses and experiment velocity increases, GPU and storage costs grow with it. Making costs visible, detecting anomalies early, and designing guardrails that ensure the right amount of compute is used at the right time are equally important. Integrating technology, operations, and organizational coordination into a single coherent system is what distinguishes Turing’s approach to MLOps.
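Early detection of cost anomalies can start from a very simple rule: flag any day whose spend far exceeds a recent baseline. The sketch below uses a trailing median; the window length, the factor, and the function name are assumptions made for the example, not Turing’s actual guardrail.

```python
from statistics import median


def cost_anomalies(daily_costs, window=7, factor=2.0):
    """Flag days whose cost exceeds `factor` times the trailing median.

    Returns (day_index, cost, baseline) tuples. The first `window` days are
    never flagged because there is no full baseline for them yet.
    """
    alerts = []
    for i in range(window, len(daily_costs)):
        baseline = median(daily_costs[i - window:i])
        if daily_costs[i] > factor * baseline:
            alerts.append((i, daily_costs[i], baseline))
    return alerts


# Hypothetical daily GPU spend with one runaway job on day 8.
costs = [100, 100, 100, 100, 100, 100, 100, 105, 320, 110]
print(cost_anomalies(costs))
```

A median baseline is used here so that a single spike does not inflate the baseline for the following days, as a mean would.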

Join us: Take on the challenge of fully autonomous driving with a diverse team of talented members from various backgrounds.