The Autonomous Driving Market

From Vision to Implementation: A Brief History

The concept of autonomous vehicles dates back at least to 1939, when General Motors presented its “Highways and Horizons” pavilion at the New York World’s Fair. Its Futurama diorama depicted a future city with high-speed “Automated Highway Systems,” a powerful message that future society would automate driving.

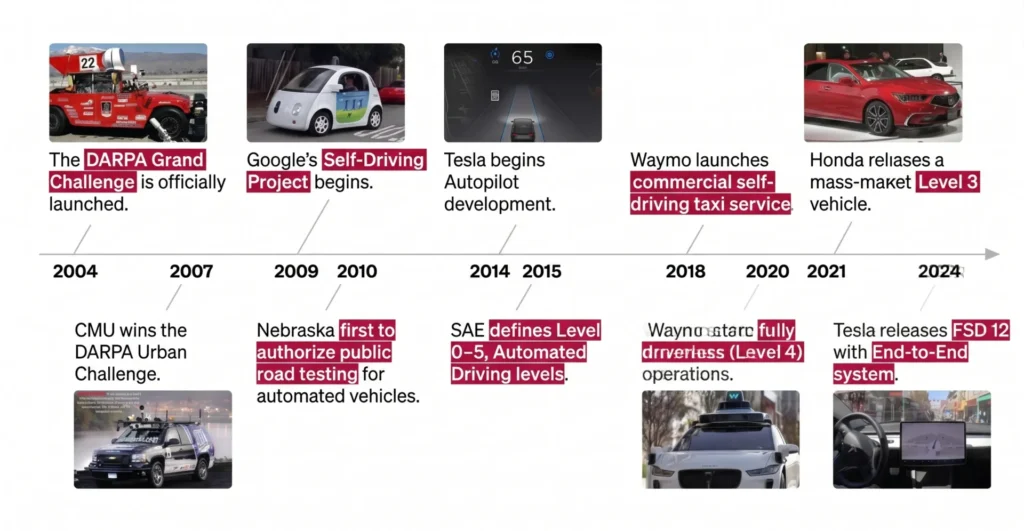

Research institutions worldwide began serious work on autonomous systems, but the real acceleration came in the mid-2000s with the DARPA Grand Challenges (2004 and 2005) and the follow-on Urban Challenge (2007). These competitions used real vehicles and drew universities and research institutions into designing and developing autonomous cars. They attracted significant investment from governments and corporations alike, and the researchers and engineers who competed went on to found or lead efforts including Waymo, which began as Google’s self-driving car project, and other leaders in autonomous driving. By the second half of the 2010s, commercial autonomous driving services had begun operating in limited regions and under restricted conditions of use.

The Autonomy Level Framework: What It Actually Means

Autonomous driving is commonly described in levels from 0 (no automation) to 5 (full automation), but this framework is not a simple sequence of technical advancement. Each level defines where responsibility lies and under what conditions the system is designed to operate. Up to Level 2, the human driver retains final responsibility. At Level 3 and above, the system itself makes the driving decisions within its design conditions and begins to bear accountability for them.

Critically, these levels do not stack neatly toward full autonomy. Expanding the conditions under which conditional automation functions does not naturally lead to full self-driving. In fact, architectures designed around defined conditions can become obstacles to generalization. Targeting full autonomy requires a technical approach that accounts for long-tail real-world scenarios from the outset.
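
The responsibility split described above can be written down as a small lookup table. The sketch below is illustrative only: the level names follow the SAE J3016 taxonomy, while the field names, the `responsible_party` helper, and its three return values are assumptions made for this example. It is a simplification, not a statement of legal liability.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomationLevel:
    level: int
    name: str                 # SAE J3016 level name
    system_responsible: bool  # does the system carry the driving task?
    condition_limited: bool   # only valid inside defined design conditions?


LEVELS = {
    0: AutomationLevel(0, "No Driving Automation", False, False),
    1: AutomationLevel(1, "Driver Assistance", False, True),
    2: AutomationLevel(2, "Partial Driving Automation", False, True),
    3: AutomationLevel(3, "Conditional Driving Automation", True, True),
    4: AutomationLevel(4, "High Driving Automation", True, True),
    5: AutomationLevel(5, "Full Driving Automation", True, False),
}


def responsible_party(level: int, within_design_conditions: bool) -> str:
    """Return who carries the driving task (simplified, hypothetical helper)."""
    spec = LEVELS[level]
    if not spec.system_responsible:
        return "human"
    if spec.condition_limited and not within_design_conditions:
        # Levels 3 and 4 are only designed to drive inside their conditions.
        return "not designed to operate"
    return "system"


for lvl in sorted(LEVELS):
    print(lvl, LEVELS[lvl].name, "->", responsible_party(lvl, within_design_conditions=True))
```

Note that Level 5 is the only row where the system is responsible and the condition limit is gone. Widening the conditions of a Level 3 or Level 4 system changes the contents of its design conditions, but it does not remove that field.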

The Limits of Rule-Based Autonomy and the Long-Tail Problem

The difficulty of driving on public roads lies in the effectively unbounded variety of low-frequency, high-difficulty situations, such as construction zones, traffic marshals, and unpredictable pedestrian behavior. Human drivers handle these by processing surrounding information and selecting actions based on social common sense and context.

Traditional autonomous driving development divided the problem into perception, prediction, planning, and control—each governed by detailed rules. But as edge cases multiply, the rules proliferate, and overall system complexity grows. The result is escalating development and validation costs, and increasing difficulty in generalization.
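
To make the modular structure concrete, here is a deliberately tiny sketch of a perception, prediction, planning, and control pipeline in which planning is an ordered rule list. Everything in it is hypothetical: the `Scene` fields, the speed values, and the rules were invented for illustration and are not taken from any production system.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Scene:
    lead_gap_m: float           # distance to the vehicle ahead, in meters
    pedestrian_near_road: bool
    construction_zone: bool


def perceive(raw: dict) -> Scene:
    """Perception: turn already-decoded sensor readings into a scene."""
    return Scene(raw["lead_gap_m"], raw["pedestrian_near_road"], raw["construction_zone"])


def predict(scene: Scene) -> bool:
    """Prediction: a crude rule for whether someone may enter the lane."""
    return scene.pedestrian_near_road


# Planning: ordered (condition, target speed in km/h) rules; the first match wins.
Rule = Tuple[Callable[[Scene, bool], bool], float]
PLANNING_RULES: List[Rule] = [
    (lambda s, may_enter: may_enter, 10.0),
    (lambda s, may_enter: s.construction_zone, 20.0),
    (lambda s, may_enter: s.lead_gap_m < 15.0, 30.0),
]
CRUISE_KMH = 50.0


def plan(scene: Scene, may_enter: bool) -> float:
    for condition, target_kmh in PLANNING_RULES:
        if condition(scene, may_enter):
            return target_kmh
    return CRUISE_KMH


def control(current_kmh: float, target_kmh: float) -> str:
    """Control: map the speed error to a coarse actuator command."""
    if current_kmh > target_kmh:
        return "brake"
    if current_kmh < target_kmh:
        return "accelerate"
    return "hold"


def drive_step(raw: dict, current_kmh: float) -> str:
    scene = perceive(raw)
    return control(current_kmh, plan(scene, predict(scene)))


reading = {"lead_gap_m": 40.0, "pedestrian_near_road": False, "construction_zone": True}
print(drive_step(reading, current_kmh=50.0))
```

Each new edge case (a traffic marshal waving cars through a red light, say) needs another entry in `PLANNING_RULES`, and its position in the list can change the outcome of every rule below it. That interaction is where the validation cost comes from.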

End-to-End Architecture and the Generative AI Shift

As a response to these challenges, End-to-End (E2E) autonomous driving, which trains a single neural network to map raw sensor inputs directly to driving outputs, has gained prominence. Rather than hand-engineering rules for every scenario, this approach acquires judgment capability through data and learning. While it requires large volumes of driving data and substantial compute, it offers the prospect of better generalization to long-tail situations.
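
The idea of learning the input-to-action mapping from data, rather than writing it as rules, can be shown in miniature with behavior cloning. The sketch below is a toy under heavy assumptions: a single number (lateral offset from the lane center) stands in for raw sensor input, a two-parameter linear model stands in for the neural network, and `expert_steering` is a made-up demonstrator. Real E2E systems differ in every one of these respects; only the training pattern is the same.

```python
def expert_steering(offset_m: float) -> float:
    """Hypothetical human demonstrator: steer back toward the lane center."""
    return -0.5 * offset_m


# Logged (observation, action) pairs, standing in for recorded driving data.
offsets = [x / 10.0 for x in range(-20, 21)]
demos = [(x, expert_steering(x)) for x in offsets]


def train(demos, epochs=200, lr=0.05):
    """Fit steering = w * offset + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(demos)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in demos) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in demos) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


w, b = train(demos)


def policy(offset_m: float) -> float:
    """Learned policy: no hand-written steering rule appears anywhere in it."""
    return w * offset_m + b


# Query the policy at an offset that never appeared in the demonstrations.
print(round(policy(3.0), 3))
```

The steering behavior here comes entirely from the demonstrations: changing how the system drives means changing the data, not editing a rule. That is also why data volume, data quality, and compute become the binding constraints for this approach.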

Recent advances in generative AI have further demonstrated that capabilities like “organizing complex information” and “making judgments that incorporate context and common sense” can be acquired through learning. These capabilities align directly with what driving demands, and they represent a significant shift in the foundational conditions for autonomous driving development. The primary battlefield in autonomous driving research is moving from vehicle engineering toward AI and machine learning.

Technologies Enabling Context and Prediction

Achieving full autonomy requires more than recognizing the current environment. It demands organizing “what matters and what doesn’t,” then predicting how the situation will evolve. Vision-language models (VLMs) and vision-language-action (VLA) models have become central tools for this, going beyond simple object detection to support the contextual understanding that driving requires.

World models that internally simulate future states, and 3D Gaussian Splatting techniques that represent three-dimensional space with high fidelity, also play important roles. Together, these enable learning and validation for scenarios that are rare in the real world but critical when they occur. The combination of contextual understanding, future prediction, and spatial reasoning defines the technical direction toward safer and more generalizable driving judgment.

The Competitive Structure: Data, Compute, and Iteration Rate

In the generative AI era of autonomous driving, competitive advantage does not come from a single clever algorithm or model. What matters is whether you can continuously collect sufficient data of the right quality, whether you can secure the compute to process and train on it, and how fast you can run the improvement cycle.

When data, compute, and development infrastructure align, learning-driven performance gains become real. This is why investment in MLOps and compute infrastructure has become a prerequisite for competitive standing in the autonomous driving market. Market attention is shifting from one-off demos and isolated successes to the question of whether a company has the structural capacity for continuous improvement.

Where the Autonomous Driving Market Stands Today

The autonomous driving market is shifting its center of gravity from automation under limited conditions toward more generalized autonomous operation. Addressing long-tail scenarios, contextual understanding, future prediction, and explainable safety are all prerequisites for that transition—none of which are short-cycle problems. They demand long-term technical investment and accumulated improvement.

E2E learning and generative AI-driven approaches are positioned as realistic options for these challenges. The autonomous driving market will continue to swing between heightened expectations and recalibration, but the underlying direction, the transfer of driving responsibility from human to AI, has not changed. We are now entering the stage of building the technology and infrastructure needed to make that transition real.
