Challenges Ahead & Future Outlook

The Compute Imperative: GPU as Prerequisite

Full autonomous driving development depends fundamentally on compute capacity. End-to-End systems require training massive neural networks, continuously evaluating their performance, and iterating at speed. As a result, GPUs are not merely development tools—they are the resource that determines the pace of technical progress and organizational growth.

The challenge ahead is not simply acquiring more hardware. What is required is the full stack: operations capable of sustaining large-scale training reliably; system design that does not slow learning and evaluation cycles no matter how much compute is deployed; and the infrastructure to convert compute into measurable performance gains. Without sufficient compute, even the most talented engineers cannot maintain a fast improvement cycle. With sufficient compute but weak operations and design, the gains never materialize.

Continuous access to compute, and the development infrastructure to use it fully, will remain a central theme going forward.

The Long Game of Data Collection

What autonomous driving ultimately requires is not better eyes, but a better mind. And that mind can only develop on a foundation of better data.

Building long-tail capability demands continuous collection of high-quality data in sufficient volume. The challenge is not simply increasing data quantity—it lies in identifying which distributions are underrepresented, deliberately targeting those gaps, and building a pipeline that connects collected data to training.

Data alone does not create value. It must be structured for learning, made evaluable, and integrated into the improvement cycle. Data collection, dataset preparation, and model development must operate as one. Looking ahead, the work of capturing long-tail scenarios will expand beyond real-world driving data to include simulation and synthetic data. Continuously growing the supply of high-quality data is one of the most demanding aspects of technical progress, and an unavoidable challenge going forward.

Continuously Building the Right Team

Full autonomous driving sits at the intersection of software and the physical world. No component can be missing—model, data, infrastructure, on-vehicle integration, and validation must all work together. This demands both breadth and depth of specialized capability across the organization.

Building and retaining the right people, without letting the organization’s learning velocity slow, is one of the central themes ahead. Because compute and data are foundational, growing the organization is not a matter of “just hire more people”: compute and development infrastructure must be in place before the value of talented people can be fully realized. Conversely, when talented people come together, they operate the compute and data foundations more effectively.

GPU, data, and people are not independent variables—they are mutually dependent. This is why continuously building the right team is positioned as a foundational driver of both technical and business progress.

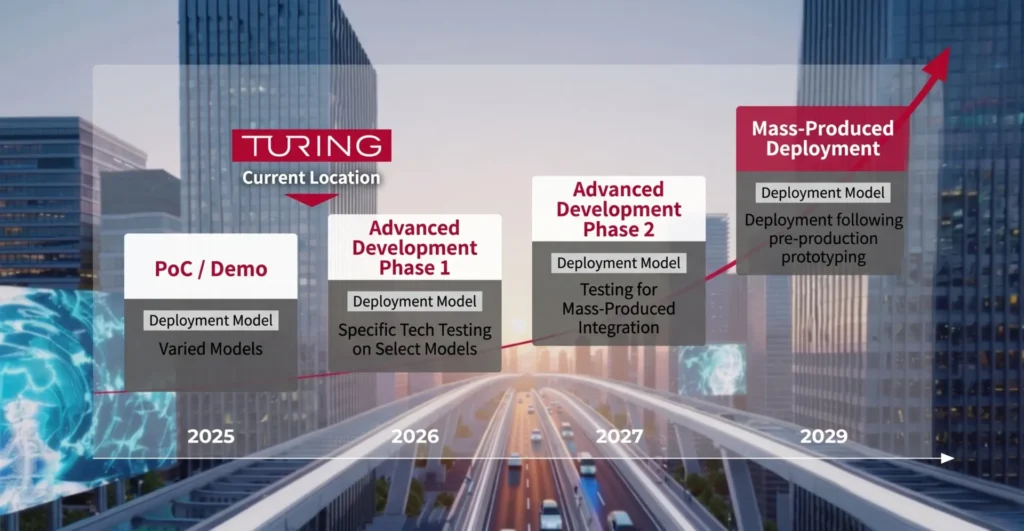

On-Vehicle Integration and the Path to Mass Production

There is a significant gap between demonstrating high performance in research and deploying a system in a production vehicle. On-vehicle environments impose simultaneous constraints: compute resources, power consumption, real-time requirements, safety design, redundancy, and quality assurance. For full autonomous driving to function as social infrastructure, the system must not merely work technically—it must continuously satisfy the prerequisites for long-term operation.

This is where partnerships with major automakers and suppliers become critical. Development targeting volume production raises the bar further, adding requirements for quality management, regulatory compliance, supply chain alignment, and integration with established development processes. There is a constant tension between research velocity and the deliberate pace required for mass production. Navigating that tension is itself one of the major challenges ahead.

At the same time, it is only through this collaboration that full autonomous driving makes the transition from experiment to production. Reaching the stage where our systems are integrated into actual vehicles—on both technical and business dimensions—is one of the central themes ahead.

The Road Ahead: Connecting GPU, Data, and People to Volume Production

The path forward is not simply about improving model performance. What matters is building full autonomous driving as a structure that can actually sustain itself. At the center of that structure are GPU compute, data collection, and the right people.

Running large-scale compute to build better models. Continuously acquiring high-quality data to sustain the learning and evaluation loop. Continuously building the team capable of designing and operating that system. These are not separate concerns—they are the core themes that must advance together to bring full autonomy closer to reality.

Beyond that accumulation lies the phase of integration into production vehicles: working through partnerships with major automakers and suppliers to clear the barriers of on-vehicle implementation and quality assurance, and to connect the system to real-world deployment. The road is long and the challenges are significant. Yet when this transition succeeds, full autonomous driving becomes social infrastructure for the first time.

Turing will continue to confront the foundational prerequisites—GPU compute, data, and people—head-on, advancing full autonomous driving on both technical and business fronts.

Join us: take on the challenge of fully autonomous driving with a diverse team of talented members from various backgrounds.