Software Engineer (MLOps)

Role

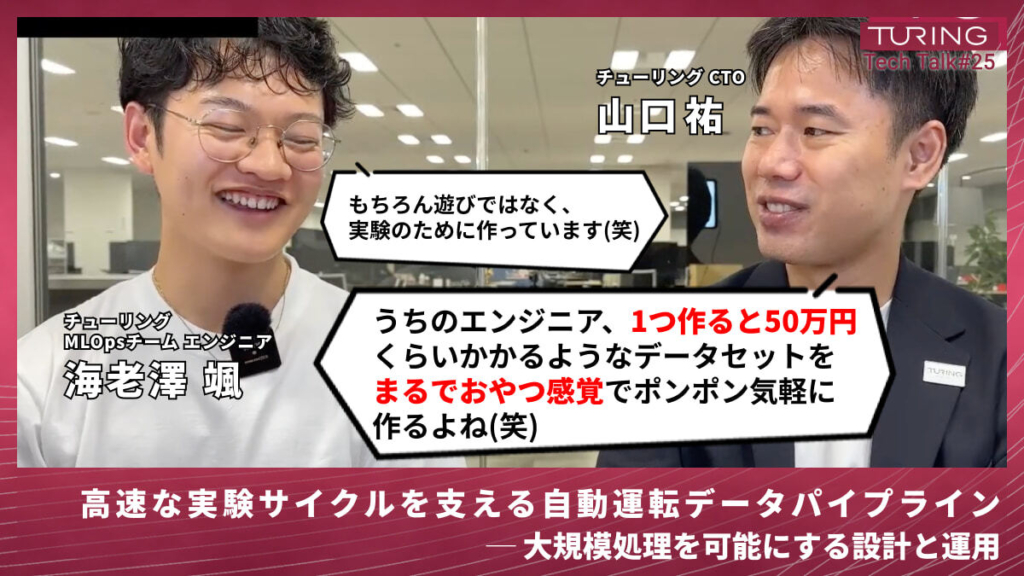

MLOps engineers at Turing build the infrastructure and systems that enable ML teams to move fast. Their mission is to dramatically improve the efficiency of ML model development through Turing’s data-centric AI approach.

Concretely, you'll tackle the hard problem of scaling data volume while maintaining data quality. You'll build and operate large-scale pipelines for unstructured sensor data (LiDAR, cameras, GNSS, IMU), automate and accelerate them on cloud infrastructure, and create the robust foundation that lets ML engineers advance model development rapidly.

What You’ll Do

- Collaborate with ML engineers to continuously improve data and models

- Automate processing using cloud infrastructure; implement internal tools and services

- Design system architecture for large-scale data pipelines

What We’re Looking For

You have strong software engineering fundamentals and a deep understanding of large-scale production systems. You're comfortable with high-volume data processing, workflow orchestration, and infrastructure automation. You think systematically about reliability, scalability, and data quality.

You understand machine learning workflows and can engage deeply with ML teams about their needs. You balance engineering rigor with the exploratory nature of research. You communicate clearly with cross-functional teams and take ownership of system reliability.

Tech Stack

- Orchestration systems (Kubernetes, workflow schedulers)

- Data processing frameworks (Apache Spark, Ray, etc.)

- MLflow or similar model management systems

- Python and infrastructure automation

- Cloud infrastructure and container technologies

- Database and data storage systems

What Makes This Role Special

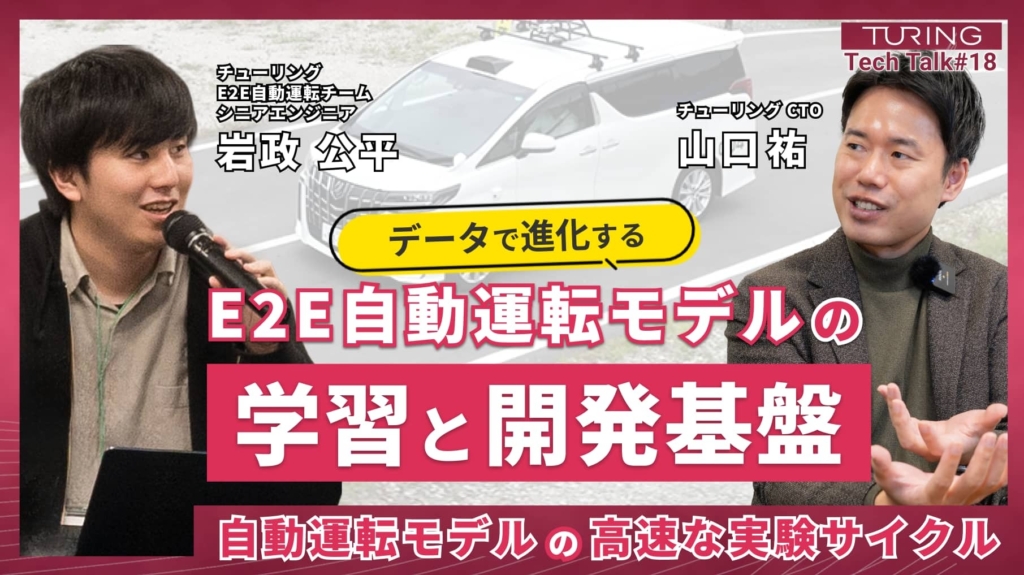

What sets this role apart is the chance to build large-scale pipelines for unstructured sensor data (LiDAR, cameras, GNSS, IMU) for a real-world technology that directly shapes how the world moves. You'll design and develop infrastructure that processes terabyte-scale data.

Building data pipelines for unstructured data is a technical domain with few established references or best practices. That means you’ll forge your own path—creating novel solutions to novel problems, without the guardrails of a well-trodden playbook.

Your mission is to scale data volume while maintaining data quality, a pairing that is among the hardest to achieve in data-centric AI, and in doing so to dramatically improve the efficiency of ML model development. By automating and accelerating data processing on cloud infrastructure, you'll support the foundation of the company's core technology and see the direct impact of your work on a technology that will help define the future.

Key Qualifications

- Experience with large-scale software development

- Experience building and operating ETL (extract, transform, load) infrastructure and workflow engines

- Experience with distributed data technologies such as Hadoop or Kafka

- Experience developing high-traffic, low-latency, large-scale services

- Experience with large-scale ad serving, video delivery, or recommendation system development

- Interest in automating and accelerating infrastructure and data processing through cloud services

- Interest in building data pipelines for unstructured data (LiDAR, cameras, etc.)

- Motivated by the challenge of maintaining data quality while scaling data volume

- Energized by creating solutions in technical domains without established references

- Driven, as a core mission, to dramatically improve the model development efficiency of ML engineers

Cross-Functional Collaboration

With Infrastructure Engineers (GPU Cluster)

You'll define requirements and coordinate the execution environment (cloud and on-premises) for the large-scale data pipelines that the MLOps team builds. The key challenges are reducing the time it takes to transfer data from cloud-based pipelines to on-premises or other cloud training environments, and building transfer mechanisms that preserve data integrity. This directly affects how quickly autonomous driving development can iterate.

Because this infrastructure has specialized requirements, such as compute for large-scale batch processing, you'll work with the infrastructure team to solve domain-specific technical challenges. Optimizing data transfer across multiple cloud environments underpins the speed of autonomous driving development as a whole.

With Software Engineers (Autonomous Driving Systems)

You'll coordinate on how data is written out from real-world devices (sensors), including the format and content of that data. When errors occur at the pipeline's upstream source, or data isn't written in the required format, you'll work together to identify root causes and change the data output formats and mechanisms accordingly.

This is an opportunity to grapple with the fundamental challenge of efficiently acquiring real-world data and processing it into a form usable for model training. The quality and format of data at the device output level determine whether downstream model training succeeds.

With ML Engineers

You'll collaborate across the entire data flow needed for ML model development: creating and improving datasets, building curation pipelines, and accelerating data processing and transformation. The focus is on improving pipelines so they satisfy ML engineers' dataset requirements.

This is an opportunity to tackle the technically demanding challenge of processing large-scale unstructured data at high speed. Solving it lets ML engineers quickly run the experiments they want to run, dramatically raising the productivity of the entire development team.

Join us

Take on the challenge of fully autonomous driving with a diverse team of talented members from various backgrounds.